Machine Learning: A Panacea for Improving the Energy Efficiency of a 5G Network

Keywords:

5G, Artificial Intelligence, Machine Learning, Python, Signal to noise ratio

Abstract

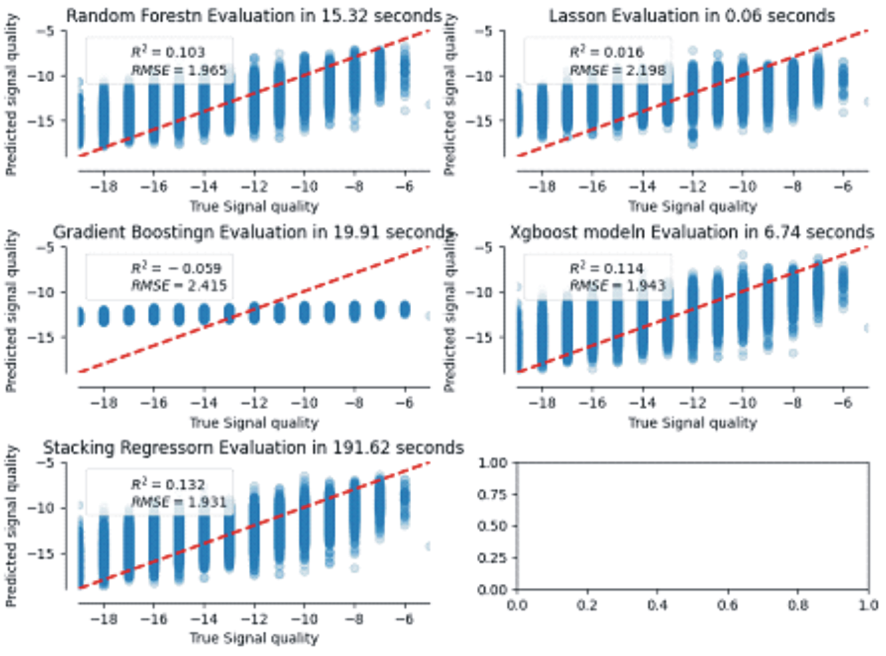

5G networks support a large number of devices and require high data rates, which results in high energy consumption. The need to manage this energy consumption efficiently using machine learning is the main motivation for this research. A 5G production dataset was cleaned, normalized and analyzed using the Python programming language. The correlation analysis shows that the highest correlation value, 0.78, exists between the reference signal power and the reference signal received power of the neighbouring cells (nRxRSRP). Based on the significance indicator, the signal to noise ratio was found to be the most important feature of the production dataset. Hyper-parameter tuning showed that, to maintain good accuracy and avoid over-fitting when developing improved energy efficiency algorithms, the number of estimators should not exceed 25 and the maximum depth should not exceed 9. Various algorithms were then trained and validated on the cleaned dataset. The ridge stacking regression algorithm, which combines the outputs of all the individual algorithms, outperformed each of them, with the lowest root mean square error (RMSE) of 1.931 and the highest coefficient of correlation of 0.132, a measure of how well the regression model fits the data. XGBoost was the best of the individual models, with an RMSE of 1.943 and a corresponding coefficient of 0.114.
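To illustrate how a ridge stacking regressor combines the outputs of individual models, the following is a minimal sketch and not the implementation used in this study. It assumes only two base models, a toy target vector, and an arbitrary penalty `alpha`; the function names (`rmse`, `fit_ridge_stack`, `stack_predict`) and all numbers are hypothetical. The ridge weights are obtained in closed form by solving (PᵀP + αI)w = Pᵀy, where the columns of P are the base-model predictions.

```python
import math


def rmse(y_true, y_pred):
    """Root mean square error between two equal-length sequences."""
    n = len(y_true)
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / n)


def fit_ridge_stack(pred_a, pred_b, y, alpha=0.01):
    """Fit ridge weights (no intercept) for two base-model prediction vectors.

    Solves the 2x2 system (P^T P + alpha * I) w = P^T y with Cramer's rule.
    """
    saa = sum(a * a for a in pred_a) + alpha
    sbb = sum(b * b for b in pred_b) + alpha
    sab = sum(a * b for a, b in zip(pred_a, pred_b))
    say = sum(a * t for a, t in zip(pred_a, y))
    sby = sum(b * t for b, t in zip(pred_b, y))
    det = saa * sbb - sab * sab  # positive whenever alpha > 0
    w_a = (say * sbb - sab * sby) / det
    w_b = (saa * sby - sab * say) / det
    return w_a, w_b


def stack_predict(pred_a, pred_b, weights):
    """Combine the two base-model predictions with the fitted weights."""
    w_a, w_b = weights
    return [w_a * a + w_b * b for a, b in zip(pred_a, pred_b)]


# Toy data (assumed for illustration): one base model overestimates the
# target by 0.5 and the other underestimates it by 0.5.
y = [1.0, 2.0, 3.0, 4.0]
pred_a = [1.5, 2.5, 3.5, 4.5]
pred_b = [0.5, 1.5, 2.5, 3.5]

weights = fit_ridge_stack(pred_a, pred_b, y)
stacked = stack_predict(pred_a, pred_b, weights)

print("RMSE model A:", rmse(y, pred_a))
print("RMSE model B:", rmse(y, pred_b))
print("RMSE stacked:", rmse(y, stacked))
```

On this toy data the stacked prediction has a lower RMSE than either base model, because the two errors have opposite signs and largely cancel. In practice the meta-learner would normally be fitted on out-of-fold predictions rather than in-sample ones, so that the stacking weights do not reward base models that over-fit.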